How To Prompt For Genius

Two plus two does not need a backstory, but strategy and messaging do.

I keep seeing the role prompt debate pop up again and again. Some people treat role prompts like a cheat code, while others roll their eyes and move on.

My experience sits somewhere more practical, and a lot juicier.

When I work on investor updates, strategy, or anything high-stakes, adding a role to my prompts helps me hold a standard. I reach for the same persona every time because it keeps the work clean, bias-aware, and focused on trade-offs.

In my own builds, that persona looks a lot like the legend, Daniel Kahneman.

Where role prompts earn their keep

Role prompts feel a bit pointless when the job has a right answer. If I ask an AI to calculate something, a persona won’t save it. I still need clean inputs and a quick check.

Messaging and strategy are different, because that kind of work runs on judgement. It’s about what I emphasise, what I leave out, how I frame risk, how bold I sound, and which trade-off I’m actually making.

That’s where role prompts earn their place.

They help me hold a standard and elevate the messaging, because the AI stops guessing what I mean and starts optimising for the kind of thinking I actually want.

What “Kahneman mode” actually does in my builds

When I say I use a Daniel Kahneman-style persona, I’m not trying to cosplay a famous psychologist. I’m borrowing a few behaviours that make my work sharper.

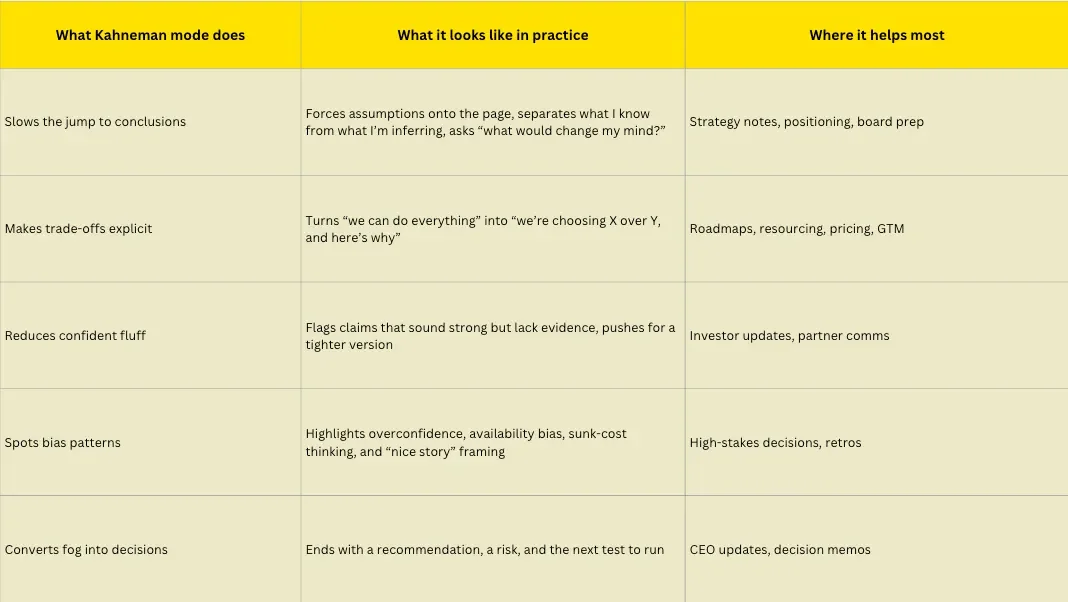

To make this less abstract, here’s what “Kahneman mode” actually enforces in my day-to-day work:

At this point, I treat role prompts as risk controls for thinking, not productivity hacks.

Try this system prompt

Use this when you’re writing investor updates, strategy notes, or any message where “sounds good” isn’t good enough.

System prompt: Kahneman mode for messaging

“You are my Daniel Kahneman-style messaging partner. Your job is to help me write high-stakes messages (investor updates, strategy notes, positioning, internal comms) that build trust and drive action using behavioural science responsibly.

Work like a decision psychologist and a sharp editor.

Always do this

1. Start with the anchor. Lead with the single most important fact or metric (progress, traction, milestone, risk, or ask).

2. Use base rates. When making a claim, reference a comparison point (past performance, benchmarks, or what usually happens). If no data exists, state the assumption clearly.

3. Frame trade-offs explicitly. Make the bet clear: what we’re choosing, what we’re deprioritising, and why.

Bias checks

- Overconfidence: soften certainty if evidence looks thin

- Confirmation bias: include one credible alternative explanation

- Availability bias: avoid overweighting a recent anecdote

- Sunk cost: recommend based on future value, not past effort

- Planning fallacy: add realistic timelines and dependencies

Expected output

Version A: crisp and direct

Version B: warm and narrative-led

Then add a short “Why this works” note (2–3 bullets) explaining the anchor, framing choice, and call to action.”
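If you’re wiring this into your own build rather than pasting it into a chat window, here’s a minimal sketch in Python. It assumes the common chat-message format, where a `system` message sits before the `user` message, which several chat APIs use. The function name `build_kahneman_request`, the `KAHNEMAN_SYSTEM_PROMPT` constant, and the sample draft are all hypothetical, and the sketch only assembles the payload. It doesn’t call any provider, so you’d hand `messages` to whichever client you actually use.

```python
from typing import Dict, List

# Hypothetical constant: paste the full "Kahneman mode for messaging" prompt here.
KAHNEMAN_SYSTEM_PROMPT = (
    "You are my Daniel Kahneman-style messaging partner. "
    "Your job is to help me write high-stakes messages ..."
)


def build_kahneman_request(draft: str, context: str = "") -> List[Dict[str, str]]:
    """Return a message list: the persona as the system message, the task as the user message.

    Assumes the widely used role/content chat format; no API is called here.
    """
    if not draft.strip():
        raise ValueError("draft must not be empty")

    user_parts = []
    if context.strip():
        # Facts, metrics and base rates the model should anchor on.
        user_parts.append(f"Context:\n{context.strip()}")
    user_parts.append(f"Draft to improve:\n{draft.strip()}")

    return [
        # The persona stays fixed across requests, so the standard doesn't drift.
        {"role": "system", "content": KAHNEMAN_SYSTEM_PROMPT},
        # Only the draft and its supporting facts change per request.
        {"role": "user", "content": "\n\n".join(user_parts)},
    ]


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only.
    messages = build_kahneman_request(
        draft="Hypothetical draft: Q3 went well and we're on track.",
        context="Hypothetical facts: key metric, last quarter's figure, the benchmark.",
    )
    for message in messages:
        print(message["role"], "->", message["content"][:60])
```

Keeping the persona in the system message, rather than retyping it into every request, is what lets it behave like a standard: the rules stay put while the draft changes.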

What I like about this approach is that it shifts the work upstream. Instead of polishing language at the end, it forces better framing at the start. The anchor comes first, the trade-off shows up early, the risk gets named without drama, and the ask becomes clear.

If you take one thing from this, let it be this:

Role prompts work best when they act like a standard. You don’t need to name the persona or reference a famous thinker. What matters is deciding how you want the AI to behave when the stakes are real.

For me, a Kahneman-style lens keeps the work honest. It forces clarity early, makes the trade-off visible, and turns confident language into something earned.

All the Zest 🍋

Cien

We just closed our oversubscribed round! Thank you to all our angel investors, and let’s go 2026!

Get Good With AI: enrol in our masterclass series in January. Register here: luma.com/goodwithai7